Why Most Content Automation Fails Before Publishing: A Practitioner's Diagnosis

Content automation fails in the gap between generation and publication, a zone most automation guides ignore entirely. This diagnostic guide covers where automated content goes to die, the technical breakpoints that kill workflows, and how to build failure-resistant pipelines that actually ship.

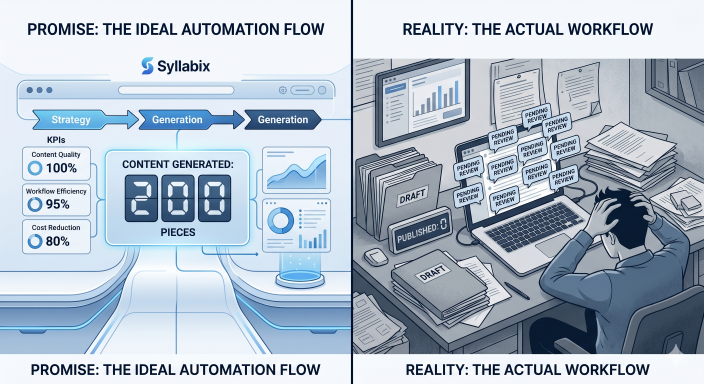

You set up the perfect content automation workflow. AI generates ideas, templates keep the formatting consistent, and integrations promise to handle publishing. Three months later, you have 47 pieces of "almost-ready" content sitting in various stages of your pipeline, and exactly zero published posts.

This isn't a tool problem. It's a workflow design problem that kills most content automation before it produces a single published piece.

The pattern repeats across industries: education companies automate course content creation but can't maintain quality standards. SaaS teams build elaborate content pipelines that break at the first API timeout. Service businesses generate endless drafts that never make it past internal review.

The failure happens in the gap between generation and publication—a zone most automation guides ignore entirely.

The Pre-Publishing Graveyard: Where Automated Content Goes to Die

Content automation fails in predictable places. Understanding these failure points prevents the common trap of building elaborate generation systems that never actually publish anything.

The Review Bottleneck

Automated content lands in human review queues where it dies slowly. The content sits "pending approval" because no one owns the decision to publish. Teams create elaborate approval workflows that become approval paralysis.

A language learning company automated quiz generation, but required three-person approval for each quiz. The automation generated 200 quizzes monthly. The approval process handled 12. The bottleneck wasn't generation capacity—it was decision-making bandwidth.

The "Almost Perfect" Trap

Automated content often needs "just a few tweaks" before publishing. These minor edits accumulate into major revision projects. The content enters an endless refinement cycle where each review reveals new issues.

An exam prep business automated practice test creation. Every generated test needed "small adjustments" to match the company's style guide. The adjustment process took longer than manual creation. The automation became a content generation tool that required full manual rewriting.

Format Degradation

Content loses formatting integrity as it moves through automation stages. Rich text becomes plain text. Images disappear. Links break. The final output requires complete reformatting before publication.

A training company automated course material creation from source documents. The automation worked perfectly until the publishing stage, where formatted content became unreadable text blocks. Every piece required manual formatting reconstruction.

Context Loss

Automated content loses essential context as it moves between systems. The original intent, target audience, and strategic purpose get stripped away. Reviewers can't evaluate content without understanding its purpose.

Technical Breakpoints That Kill Content Workflows

Technical failures stop content automation at predictable points. These aren't random glitches—they're systematic weaknesses in how automation tools handle real-world publishing requirements.

API Timeout Cascades

Content automation chains multiple API calls together. When one API times out, the entire workflow fails. Most automation platforms don't handle partial failures gracefully.

A content marketing team built a workflow that pulled data from three sources, generated content, and published to two platforms. Any single API timeout killed the entire batch. A 30-second delay in one service meant losing hours of generated content.
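
One way to keep a single timeout from sinking a whole batch is to retry each call with backoff and record failures per item instead of aborting. The following is a minimal sketch, not a definitive implementation: `call_with_retry` and `run_batch` are hypothetical names, and Python's built-in `TimeoutError` stands in for whatever exception a real API client raises.

```python
import time
from typing import Callable, Iterable


def call_with_retry(fn: Callable[[], str], attempts: int = 3,
                    base_delay: float = 1.0,
                    sleep: Callable[[float], None] = time.sleep) -> str:
    """Call fn, retrying on TimeoutError with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fn()
        except TimeoutError:
            if attempt == attempts - 1:
                raise  # out of retries: let the caller record the failure
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...
    raise ValueError("attempts must be at least 1")


def run_batch(items: Iterable[str], process: Callable[[str], str],
              sleep: Callable[[float], None] = time.sleep):
    """Process every item; one failed item never discards the others."""
    done, failed = {}, {}
    for item in items:
        try:
            done[item] = call_with_retry(lambda: process(item), sleep=sleep)
        except TimeoutError as exc:
            failed[item] = str(exc)  # keep going, report this one later
    return done, failed
```

The `failed` dictionary gives the team a short list to rerun, while everything in `done` is already safe to pass to the next step.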

Integration Version Conflicts

Automation tools update frequently. Each update can break existing integrations. A workflow that worked perfectly last month fails completely after a platform update.

An online course provider automated lesson creation using five different tools. An update to one of those tools changed its API response format. The entire automation stopped working. Fixing the integration required rebuilding the workflow from scratch.

Data Format Mismatches

Automated content moves between systems with different data requirements. Rich text editors expect HTML. Social platforms want plain text. Email systems need specific formatting. Content gets corrupted in translation.

A newsletter automation pulled content from a CMS and sent it via email. The CMS exported HTML with custom CSS classes. The email platform stripped the formatting. Every newsletter required manual reformatting before sending.
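
A common mitigation is to convert content explicitly at the boundary between systems, rather than letting the receiving platform strip whatever it does not understand. The sketch below uses only Python's standard `html.parser` module to flatten simple HTML into plain text while keeping link targets visible. `PlainTextRenderer` and `html_to_text` are hypothetical names, and the tag handling is deliberately minimal; real CMS exports will likely need more cases.

```python
from html.parser import HTMLParser


class PlainTextRenderer(HTMLParser):
    """Flatten simple HTML to plain text, keeping link targets visible."""

    BLOCK_TAGS = {"p", "div", "br", "h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._href = None

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
        elif tag == "li":
            self.parts.append("\n- ")  # list items become dashed lines
        elif tag in self.BLOCK_TAGS:
            self.parts.append("\n")  # block elements start a new line

    def handle_endtag(self, tag):
        if tag == "a" and self._href:
            self.parts.append(f" ({self._href})")  # keep the URL readable
            self._href = None

    def handle_data(self, data):
        self.parts.append(data)


def html_to_text(html):
    """Return plain text with CSS classes and other attributes dropped."""
    renderer = PlainTextRenderer()
    renderer.feed(html)
    renderer.close()
    lines = (line.strip() for line in "".join(renderer.parts).splitlines())
    return "\n".join(line for line in lines if line)
```

Because the conversion is explicit, its output can be checked before sending instead of discovered after the email platform has already mangled it.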

Rate Limiting Failures

Publishing platforms limit how quickly you can post content. Automation workflows often ignore these limits and fail when they hit rate restrictions.

A social media automation tried to publish 50 posts simultaneously. The platform's rate limit allowed 10 posts per hour. The automation failed completely instead of queuing the remaining posts.
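
Queuing can be as simple as assigning each post a publish time so that no window exceeds the limit. This sketch uses the numbers from the example above (10 posts per hour) and assumes fixed windows; real platforms publish their own limits and may use sliding windows instead. `schedule_posts` is a hypothetical helper.

```python
from datetime import datetime, timedelta


def schedule_posts(posts, start, limit=10, window=timedelta(hours=1)):
    """Assign each post a publish time so no window exceeds the limit."""
    schedule = []
    for index, post in enumerate(posts):
        slot = index // limit  # which window this post falls into
        schedule.append((start + slot * window, post))
    return schedule


start = datetime(2024, 1, 1, 9, 0)
plan = schedule_posts([f"post-{n}" for n in range(50)], start)
first_window = [post for when, post in plan if when == start]
```

With 50 posts and a limit of 10, the plan spreads publishing across five hourly windows rather than failing on the eleventh request.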

Authentication Expiration

Automated workflows rely on authentication tokens that expire. When tokens expire, the automation fails silently. Content gets generated but never published.
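
The silent part of this failure can be removed by checking token expiry before each publishing step and stopping loudly when a refresh does not succeed. The sketch below is an illustration under assumptions: `Token`, `ensure_fresh`, and `TokenExpiredError` are hypothetical names, and `refresh` is whatever callable your platform's client provides for renewing credentials.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


class TokenExpiredError(Exception):
    """Raised before publishing so an expired token cannot fail silently."""


@dataclass
class Token:
    value: str
    expires_at: datetime


def ensure_fresh(token, refresh, now=None, margin=timedelta(minutes=5)):
    """Return a usable token, refreshing it if it expires within margin."""
    now = now or datetime.now(timezone.utc)
    if token.expires_at - now > margin:
        return token  # still comfortably valid
    refreshed = refresh()
    if refreshed is None or refreshed.expires_at <= now:
        raise TokenExpiredError("token refresh failed; publishing halted")
    return refreshed
```

Calling `ensure_fresh` at the start of the publishing step turns "content was generated but never published" into an explicit error someone can act on.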

The Human Handoff Problem Nobody Talks About

Automation fails most often where it meets human processes. These handoff points require careful design, but most teams treat them as afterthoughts.

Unclear Ownership

Automated workflows create content without clear ownership. When the automation produces something questionable, no one knows who should fix it. The content sits in limbo while teams debate responsibility.

A software company automated blog post creation from product updates. The automation worked, but no one made the decision to publish. Marketing thought engineering should approve technical accuracy. Engineering thought marketing should handle publishing decisions. Posts accumulated unpublished.

Context Switching Costs

Humans need context to evaluate automated content effectively. Switching between reviewing automated content and other work creates cognitive overhead that slows the entire process.

A training company automated quiz creation but required human review for accuracy. Reviewers needed 15 minutes to understand each quiz's context before they could evaluate it effectively. The context switching made the review slower than manual creation.

Quality Expectation Mismatches

Automated content rarely matches human quality expectations without significant post-processing. Teams underestimate the editing required to make automated content publishable.

An education company automated course outline creation. The automation produced structurally correct outlines that needed extensive rewriting to match their pedagogical standards. The "automation" became a first-draft generator that required full human rewriting.

Feedback Loop Failures

Automated systems don't learn from human feedback without explicit design. When humans fix automated content, those improvements don't flow back to improve future automation.

A content team automated article research but manually rewrote every piece. The manual improvements were never fed back into the automation. The system continued producing content that required the same manual fixes repeatedly.

Building Failure-Resistant Content Pipelines

Resilient content automation anticipates failure points and builds recovery mechanisms. These patterns prevent common breakdowns and keep content flowing even when individual components fail.

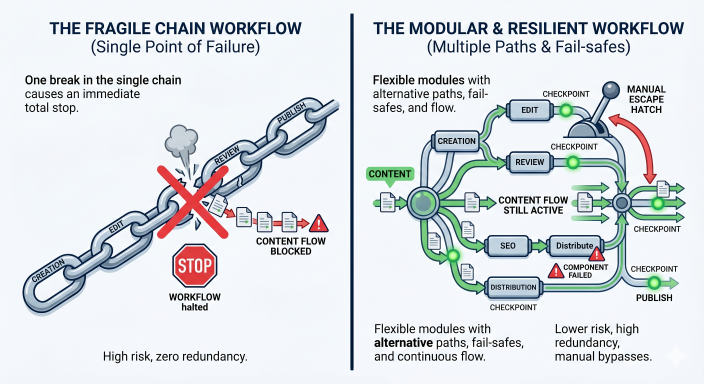

Modular Workflow Design

Break automation into independent modules that can fail without killing the entire workflow. Each module should produce usable output even if subsequent modules fail.

Instead of building one workflow that generates, formats, and publishes content, create three separate workflows. If publishing fails, you still have formatted content ready for manual publishing. If formatting fails, you still have generated content ready for manual formatting.
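
The separation described above can be sketched as a runner that saves each stage's output before the next stage starts, so a late failure leaves earlier work on disk. The names `run_stage` and `run_pipeline` and the JSON-file layout are assumptions for illustration; a real setup might store output in a CMS draft or a database instead.

```python
import json
import tempfile
from pathlib import Path


def run_stage(name, fn, payload, out_dir):
    """Run one stage and persist its output before anything else happens."""
    result = fn(payload)
    (out_dir / f"{name}.json").write_text(json.dumps(result))
    return result


def run_pipeline(topic, stages, out_dir):
    """Run stages in order; stop at the first failure but keep saved output."""
    payload, completed = topic, []
    for name, fn in stages:
        try:
            payload = run_stage(name, fn, payload, out_dir)
        except Exception as exc:
            return completed, f"{name} failed: {exc}"
        completed.append(name)
    return completed, None
```

If the publish stage raises, `completed` still lists the generate and format stages, and the formatted output is sitting in `format.json` ready for manual publishing.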

Clear Failure States

Design explicit failure handling for each automation step. When something breaks, the system should fail clearly with actionable error messages, not silently produce broken output.

A content automation should distinguish between "API temporarily unavailable" (retry later) and "content violates platform guidelines" (requires human review). Different failures need different responses.
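
The distinction between those two failures can be made explicit in code by mapping each error type to a required response. This is a minimal sketch: `PolicyViolation`, `Action`, and `classify_failure` are hypothetical names, and which exceptions count as transient depends on the client libraries you actually use.

```python
from enum import Enum


class Action(Enum):
    RETRY_LATER = "retry later"
    HUMAN_REVIEW = "send to human review"
    STOP = "stop and alert the workflow owner"


class PolicyViolation(Exception):
    """Content was rejected by the platform's guidelines."""


def classify_failure(exc):
    """Map an exception to the response it needs."""
    if isinstance(exc, (TimeoutError, ConnectionError)):
        return Action.RETRY_LATER  # the service is probably just unavailable
    if isinstance(exc, PolicyViolation):
        return Action.HUMAN_REVIEW  # retrying will not help
    return Action.STOP  # unknown failure: do not guess


def describe_failure(step, exc):
    """Build an actionable message instead of a bare stack trace."""
    return f"{step}: {exc} -> {classify_failure(exc).value}"
```

Unknown errors default to stopping, on the assumption that an unclassified failure is safer to surface than to retry blindly.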

Manual Escape Hatches

Every automated step should have a manual alternative. When automation fails, humans should be able to complete the process without rebuilding the entire workflow.

An automated publishing workflow should allow manual publishing with the same formatting and metadata. When the automation breaks, content can still ship on schedule through manual intervention.

Checkpoint Systems

Build review points where humans can evaluate and approve automated work before it continues to the next stage. This prevents bad automated content from propagating through the entire system.

A course creation automation should checkpoint after outline generation, after content creation, and before publishing. Each checkpoint allows human intervention without scrapping previous work.
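
One way to model those three checkpoints is a small record of which approvals a job has received, where sending work back reopens a checkpoint without discarding earlier approvals. The checkpoint names and the `CourseJob` class below are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass, field

# Ordered checkpoints: after outline, after content, before publishing.
CHECKPOINTS = ["outline", "content", "publish"]


@dataclass
class CourseJob:
    title: str
    approved: list = field(default_factory=list)

    def awaiting(self):
        """Return the first checkpoint still awaiting approval, or None."""
        for checkpoint in CHECKPOINTS:
            if checkpoint not in self.approved:
                return checkpoint
        return None

    def approve(self, checkpoint):
        """Approve the current checkpoint; skipping ahead is an error."""
        if checkpoint != self.awaiting():
            raise ValueError(
                f"cannot approve {checkpoint!r} while awaiting {self.awaiting()!r}"
            )
        self.approved.append(checkpoint)

    def send_back(self, checkpoint):
        """Reopen a checkpoint; approvals before it are kept."""
        index = CHECKPOINTS.index(checkpoint)
        self.approved = [
            c for c in self.approved if CHECKPOINTS.index(c) < index
        ]
```

Sending the content back for rework leaves the outline approval in place, so the reviewer never has to re-approve work that was already accepted.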

Graceful Degradation

Design automation to produce simpler output when complex processes fail. A workflow that can't generate perfect content should still generate usable content.

If an automation can't generate formatted blog posts, it should still generate plain text drafts. If it can't generate images, it should still generate text content. Partial success is better than complete failure.
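
In code, this usually means trying output formats from richest to simplest and returning the first one that works. The helper below is a sketch with a hypothetical name, `render_with_fallback`; the renderers passed to it are whatever formatting functions your workflow already has.

```python
def render_with_fallback(draft, renderers):
    """Try renderers from richest to simplest; return the first that works.

    Returns (renderer_name, output, errors) so the caller can see both
    what was produced and what was skipped along the way.
    """
    errors = []
    for name, render in renderers:
        try:
            return name, render(draft), errors
        except Exception as exc:
            errors.append(f"{name}: {exc}")  # note the failure, try simpler
    raise RuntimeError("all renderers failed: " + "; ".join(errors))
```

Returning the collected errors alongside the output matters: the degraded draft still ships, but someone can see that the richer format failed and why.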

The Minimum Viable Automation Test

Test content automation at a small scale before building complex workflows. This framework validates each component before integration.

Single-Piece Validation

Start by automating one piece of content from start to finish. Don't build batch processing until single-piece automation works reliably.

Automate the creation and publishing of one blog post. Verify every step works correctly. Only after single-post automation succeeds should you attempt multiple posts.

Component Testing

Test each automation component independently before chaining them together. Verify that content generation works before adding formatting. Verify formatting works before adding publishing.

Test your content generation API separately from your publishing API. Ensure each component handles errors gracefully before combining them into a workflow.

Human Handoff Testing

Test every point where automation hands work to humans. Verify that humans can understand and act on automated output without additional context.

Generate automated content and give it to someone unfamiliar with the automation. Can they evaluate and approve it effectively? If not, the handoff needs improvement.

Failure Recovery Testing

Deliberately break each automation component and verify that the system fails gracefully. Test what happens when APIs time out, when content violates guidelines, and when publishing platforms are unavailable.

Turn off your content API and run the automation. Does it fail clearly with actionable error messages? Can you resume the workflow when the API returns? If not, add better error handling.
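
The same experiment can be rehearsed in a test before touching a real service. The sketch below uses a hypothetical stand-in, `FakeContentAPI`, that can be switched off, plus a `run_workflow` function that skips drafts it already has so a later run resumes where the outage stopped it.

```python
class FakeContentAPI:
    """Test double that can be switched off to simulate an outage."""

    def __init__(self):
        self.available = True

    def generate(self, topic):
        if not self.available:
            raise ConnectionError("content API unavailable")
        return f"Draft about {topic}"


def run_workflow(api, topics, drafts):
    """Generate missing drafts; return the topics that still need a retry."""
    pending = []
    for topic in topics:
        if topic in drafts:
            continue  # finished in an earlier run, so resuming is cheap
        try:
            drafts[topic] = api.generate(topic)
        except ConnectionError:
            pending.append(topic)
    return pending


api, drafts = FakeContentAPI(), {}
api.available = False
pending_during_outage = run_workflow(api, ["seo", "pricing"], drafts)
api.available = True
pending_after_recovery = run_workflow(api, ["seo", "pricing"], drafts)
```

During the simulated outage every topic is reported as pending rather than lost; after recovery the second run fills in the drafts and reports nothing outstanding.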

Scale Testing

Test automation at your target volume before relying on it for production content. Many automations work for single pieces but fail under load.

If you plan to generate 50 posts monthly, test generating 50 posts in a development environment. Verify that rate limits, API quotas, and processing time work at scale.

Success Metrics

Define specific success criteria before testing. Automation should meet these criteria consistently before production use.

Successful automation might mean: 90% of generated content requires no human editing, 95% of automation runs complete without errors, and the average time from generation to publication is under 2 hours.
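
Criteria like these are easier to enforce when they are computed from run records rather than estimated. The sketch below assumes a hypothetical `Run` record per automation run and uses the example thresholds from the paragraph above; substitute your own targets.

```python
from dataclasses import dataclass
from datetime import timedelta
from statistics import mean


@dataclass
class Run:
    completed: bool            # the run finished without errors
    edited_by_human: bool      # the output needed manual editing
    time_to_publish: timedelta


def evaluate(runs):
    """Compute the three example metrics from a list of runs."""
    completed = [r for r in runs if r.completed]
    if not completed:
        return {"no_edit_rate": 0.0, "completion_rate": 0.0,
                "avg_hours_to_publish": float("inf")}
    unedited = sum(1 for r in completed if not r.edited_by_human)
    seconds = mean(r.time_to_publish.total_seconds() for r in completed)
    return {
        "no_edit_rate": unedited / len(completed),
        "completion_rate": len(completed) / len(runs),
        "avg_hours_to_publish": seconds / 3600,
    }


def meets_targets(metrics):
    """Example targets: 90% unedited, 95% completed, under 2 hours."""
    return (metrics["no_edit_rate"] >= 0.90
            and metrics["completion_rate"] >= 0.95
            and metrics["avg_hours_to_publish"] < 2)
```

Running `evaluate` over the records from a test period gives a yes-or-no answer on production readiness instead of a general impression.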

Building Content Systems That Actually Ship

Content automation succeeds when it's designed for the entire publishing process, not just content generation. The most sophisticated AI content generator is worthless if it can't reliably publish finished pieces.

Focus on building simple, reliable workflows that handle failure gracefully. Start with minimal automation that works consistently, then add complexity gradually. Most teams try to automate everything at once and end up automating nothing effectively.

The goal isn't perfect automation—it's reliable content publishing with less manual work. Sometimes the best automation is a simple workflow that handles 80% of the process automatically and hands the remaining 20% to humans clearly.

If your content workflow keeps producing drafts but not published assets, the problem is probably not your prompts. It is usually the handoff design, failure handling, or review structure. Fix those first, and automation becomes far more useful.
